Automated Creation of Docker Containers

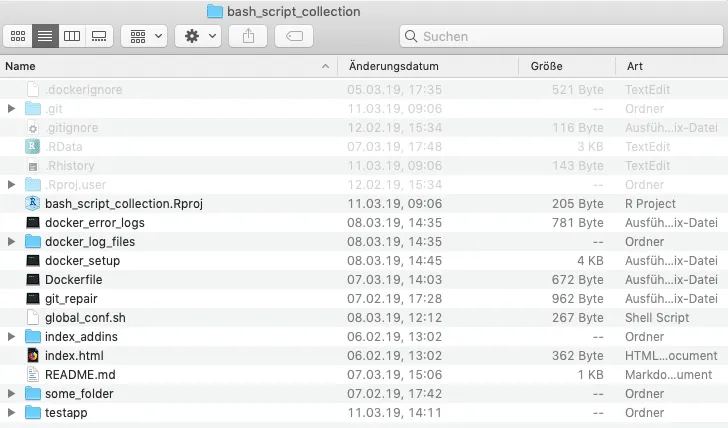

In the last Docker tutorial, Olli presented how to build a Docker image of R-Base scripts with rocker and how to run them in a container. Based on that, I’m going to discuss how to automate the process with a bash/shell script. Since we usually use containers to deploy our apps at statworx, I created a small test app with R Shiny to be saved in a test container. The script can, of course, containerise any other application as well. I also created a repository on our blog GitHub, where you can find all files and the test app.

Feel free to test and use any of its content. If you are interested in writing a setup script yourself, note that you can also use other programming languages such as Python.

The idea behind it

$ docker-machine ls

NAME ACTIVE DRIVER STATE URL SWARM DOCKER ERRORS

Dataiku - virtualbox Stopped Unknown

default - virtualbox Stopped Unknown

ShowCase - virtualbox Stopped Unknown

SQLworkshop - virtualbox Stopped Unknown

TestMachine - virtualbox Stopped Unknown

$ docker-machine start TestMachine

Starting "TestMachine"...

(TestMachine) Check network to re-create if needed...

(TestMachine) Waiting for an IP...

Machine "TestMachine" was started.

Waiting for SSH to be available...

Detecting the provisioner...

Started machines may have new IP addresses. You may need to re-run the `docker-machine env` command.

$ eval $(docker-machine env --no-proxy TestMachine)

$ docker ps -a

CONTAINER ID IMAGE COMMAND CREATED STATUS PORTS NAMES

cfa02575ca2c testimage "/sbin/my_init" 2 weeks ago Exited (255) About a minute ago 0.0.0.0:2000->3838/tcp testcontainer

$ docker start testcontainer

testcontainer

$ docker ps

...

Building and rebuilding Docker images every time you change your application gets tedious, especially if you keep typing the same commands over and over, like the ones shown above. Olli discussed the advantage of creating an intermediary image for the most time-consuming steps, such as installing R packages, to speed things up during content creation. That’s an excellent practice, and you should do it for every project where it is viable. But how about speeding up the containerisation itself? What is needed is a small helper tool that, once written, does all the work for you.

The tools to use

To create Docker images and containers, you need to install Docker on your computer. If you want to test or use all the material provided in this blog post and on our blog GitHub, you should also install VirtualBox, R and RStudio, if you do not already have them. If you use Windows 10 as your operating system, you also need to install the Windows Subsystem for Linux. Alternatively, you can write your own script files with PowerShell or something similar.

The tool itself is a bash/shell script that builds and runs Docker containers for you. To use it, copy the docker_setup executable into your project directory and execute it. The only thing the tool then requires from you is some naming input.

If the execution for some reason fails or produces errors, try to run the tool via the terminal.

source ./docker_setup

To replicate the tool or start a new bash/shell script yourself, open your preferred text editor, create a new text file, place the preamble #!/bin/bash (the shebang line) at the very top of it and save it. Next, open your terminal, navigate to the directory where you just saved your script and make it executable by typing chmod +x your_script_name. To test whether it works correctly, you can, for example, add the line echo 'it works!' below your preamble.

#!/bin/bash

echo 'it works!'

If you want to check out all available mode options, visit the wiki and for a complete guide visit the Linux Shell Scripting Tutorial.

The code that runs it

If you open the docker_setup executable with your preferred text editor, the code might feel a little overwhelming or confusing at first, but it is pretty straightforward.

#!/bin/bash

# This is a setup bash for docker containers!

# activate via: chmod 0755 setup_bash or chmod +x setup_bash

# navigate to wd docker_contents

# execute in terminal via source ./setup_bash

echo ""

echo "Welcome, You are executing a setup script bash for docker containers."

echo ""

echo "Do you want to use the Default from the global configurations?"

echo ""

source global_conf.sh

echo "machine name = $machine_name"

echo "container = $container_name"

echo "image = $image_name"

echo "app name = $app_name"

echo "password = $password_name"

echo ""

docker-machine ls

echo ""

read -p "What is the name of your docker-machine [default]? " machine_name

echo ""

# an empty status means docker-machine does not know this machine yet

if [[ "$(docker-machine status "$machine_name" 2> /dev/null)" == "" ]]; then

  echo "creating machine..." && docker-machine create "$machine_name"

else

  echo "machine already exists, starting machine..." && docker-machine start "$machine_name"

fi

echo ""

echo "activating machine..."

eval $(docker-machine env --no-proxy $machine_name)

echo ""

docker ps -a

echo ""

read -p "What is the name of your docker container? " container_name

echo ""

docker image ls

echo ""

read -p "What is the name of your docker image? (lower case only!!) " image_name

echo ""

The main code structure rests on nested if statements. Unlike a manual Docker setup via the terminal, the script needs to account for many different possibilities and even leave some margin for error. The first if statement in the code above, for example, checks whether the requested docker-machine already exists. If the machine does not exist, it is created. If it does exist, it is simply started for use.
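The decision made by that first if statement can be sketched as a small function, which makes it easy to try out without Docker Machine installed. This is only an illustration: the function name machine_action is hypothetical, and the extra "already running" case is an assumption that the script above does not handle.

```shell
# Map the output of `docker-machine status <name> 2> /dev/null`
# to the step a setup script could take next.
machine_action() {
  case "$1" in
    "")      echo "create" ;;  # no output: the machine is unknown
    Running) echo "none" ;;    # assumption: nothing to do if it already runs
    *)       echo "start" ;;   # e.g. Stopped: the machine exists, start it
  esac
}

# Hypothetical usage (requires docker-machine):
#   status="$(docker-machine status "$machine_name" 2> /dev/null)"
#   case "$(machine_action "$status")" in
#     create) docker-machine create "$machine_name" ;;
#     start)  docker-machine start "$machine_name" ;;
#   esac
```

Keeping the decision separate from the docker-machine calls means the logic can be checked with plain strings before it touches a real machine.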

The individual code elements and commands are even more straightforward. The echo command prints a message, or a blank line for better readability. The read command reads user input and stores it in a variable, which is then used wherever the rest of the script needs it. Most other code elements are Docker commands and are essentially the same as the ones entered manually via the terminal. If you are interested in learning more about Docker commands, check the documentation and Olli's awesome blog post.
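As a minimal sketch of how input gathered with read could be post-processed, the two helpers below fall back to a default when the answer is empty and convert an image name to lower case, as the prompt in the script asks for. The helper names with_default and to_lower and the fallback value testimage are hypothetical and not part of docker_setup.

```shell
# Print the first argument, or the second one if the first is empty.
with_default() {
  printf '%s\n' "${1:-$2}"
}

# Print the argument converted to lower case.
to_lower() {
  printf '%s\n' "$1" | tr '[:upper:]' '[:lower:]'
}

# Hypothetical usage in an interactive bash script:
#   read -r -p "What is the name of your docker image? " answer
#   image_name="$(to_lower "$(with_default "$answer" "testimage")")"
```

With helpers like these, pressing Enter at a prompt would select the default instead of leaving the variable empty.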

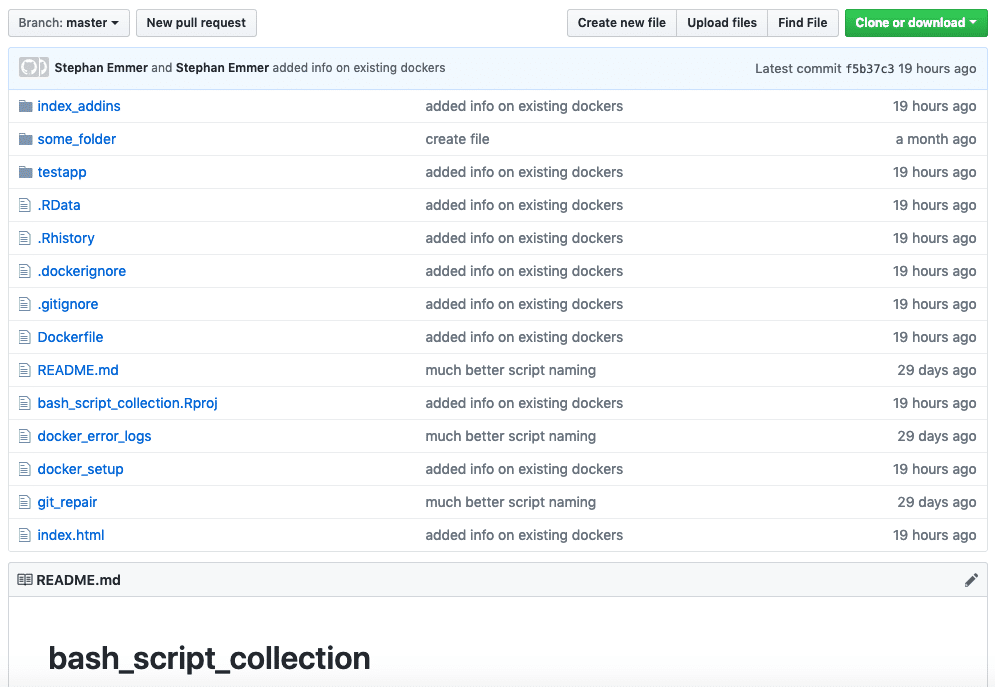

The git repository

The focal point of the Git repository on our blog GitHub is the automated Docker setup, but it also contains some other conveniences and will hopefully grow into an entire collection of useful scripts. I am aware that there are potentially better, faster and more convenient solutions for everything included in the repository, but if we view it as an exercise and a form of creative exchange, I think we can get some use out of it.

The docker_error_logs executable allows for quick troubleshooting and storage of log files if your program or app fails to work within your docker container.
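To give an idea of what storing log files can look like, here is a rough sketch built around the docker logs command. It does not reproduce the docker_error_logs executable itself; the function name log_file_name, the logs/ directory and the naming pattern are assumptions made for illustration.

```shell
# Build a log file path from a container name and a timestamp,
# e.g. logs/testcontainer_20240101-120000.log (hypothetical pattern).
log_file_name() {
  printf 'logs/%s_%s.log\n' "$1" "$2"
}

# Hypothetical usage (requires Docker and an existing container):
#   mkdir -p logs
#   stamp="$(date +%Y%m%d-%H%M%S)"
#   docker logs "$container_name" > "$(log_file_name "$container_name" "$stamp")" 2>&1
```

Redirecting with 2>&1 keeps both the standard output and the error output of the container in the same file.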

The git_repair executable is not fully tested yet and should be used with care. The idea is to quickly check whether your local project or repository is connected to a corresponding GitHub repository, given a URL, and if not, to 'repair' the connection. It can also manage git pulls, commits and pushes for you, but again, please use it carefully.

Next projects to come

As mentioned, I plan on further expanding the collection and usefulness of our blog github soon. In the next step I will add more convenience to the docker setup by adding a separate file that provides the option to write and store default values for repeated executions. So stay tuned and visit our statworx Blog again soon. Until then, happy coding.